Anthropic's natural language autoencoders translate complex neural activations into human-readable text, offering a breakthrough for auditing enterprise AI security risks in real time.

Can Anthropic's Natural Language Autoencoders Solve the Black Box Problem?

Enterprise AI security risks have long centered on a deceptively simple problem: you can observe what a model outputs, but you cannot see why it produced that output. For security teams deploying large language models in high-stakes environments, this opacity isn't just philosophically unsatisfying — it's a material vulnerability. Adversarial inputs, hidden biases, and unexpected reasoning chains can propagate through production systems entirely undetected. Anthropic's newly released natural language autoencoders represent the most concrete attempt yet to crack this problem open, translating Claude's internal activations directly into human-readable text explanations that safety engineers can actually audit.

This is not a marginal improvement to existing interpretability tooling. It is a structural shift in how mechanistic transparency can be operationalized at the enterprise level.

The Activation Problem: Why Black Boxes Persist

To understand why this matters, it helps to be precise about what has made neural network interpretability so resistant to progress. Modern transformer-based models like Claude operate through billions of numerical activations — weighted signals propagating through attention heads and feed-forward layers. These activations encode everything the model "knows" about a given input at a given processing step, but in a form that is fundamentally alien to human cognition: high-dimensional floating-point vectors with no inherent semantic labels.

Previous interpretability approaches have attacked this problem from several angles:

- Probing classifiers: Train a small supervised model to predict whether a specific concept is encoded in an activation vector. Useful, but limited to concepts you already know to look for.

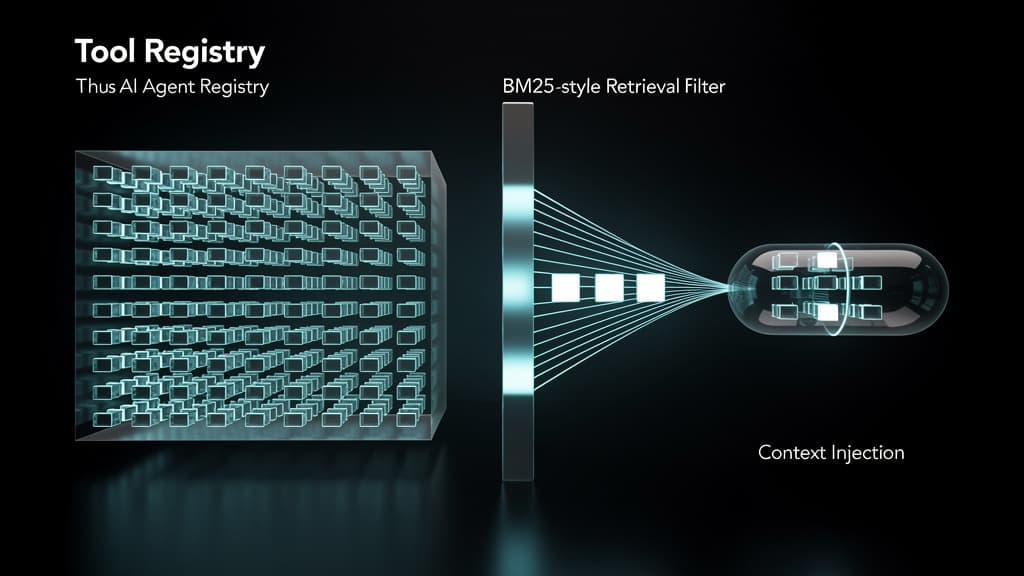

- Sparse autoencoders (SAEs): Decompose activation vectors into sparse combinations of interpretable features. Anthropic's own prior work on SAEs, published in 2023 and 2024, showed that individual neurons are often polysemantic: a single neuron might activate for "banana," "yellow," and "tropical." SAEs helped disentangle these, but the resulting features were still represented as numerical indices, not language.

- Attention visualization: Map which input tokens receive high attention weights. Widely used but consistently shown to be an incomplete proxy for model reasoning.

Each of these methods produces outputs that require expert interpretation. A security analyst without deep ML expertise cannot look at a sparse feature activation pattern and draw actionable conclusions. The gap between "mechanistic finding" and "operational security decision" has remained wide.

The core enterprise AI security risk isn't that models behave badly in demos — it's that when they behave badly in production, no one can reconstruct why.

What Natural Language Autoencoders Actually Do

According to Anthropic's newly released research, natural language autoencoders extend the sparse autoencoder paradigm by replacing numerical feature representations with natural language descriptions. The architecture learns to encode activation patterns not into latent numerical indices but into token sequences — effectively writing a brief explanation of what the model is internally representing at a given layer, for a given input.

The pipeline works roughly as follows:

- Encoder pass: An input prompt is fed through Claude. At a target layer, the activation vector is captured.

- Autoencoder translation: The natural language autoencoder maps that activation vector to a compact natural language description — for example, "the model is representing a legal liability context with adversarial framing" or "this activation encodes a request for step-by-step technical instructions."

- Decoder reconstruction: The system can optionally reconstruct an approximation of the original activation from the natural language description, enabling fidelity checks on how much semantic content was preserved in the translation.

- Audit output: The natural language descriptions are logged, queryable, and human-readable without requiring the auditor to understand the underlying vector mathematics.

This architecture addresses the expert-gap problem directly. A compliance officer, a red team analyst, or a product safety reviewer can read activation explanations the same way they read a log file.
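The pipeline steps above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not Anthropic's implementation: the "autoencoder" here is a nearest-prototype lookup over a hand-written codebook rather than a learned model, the activation is assumed to have been captured already, and every name (`CODEBOOK`, `describe`, `reconstruct`, `audit`) is hypothetical.

```python
import math
from dataclasses import dataclass

# Hypothetical codebook: description -> prototype activation (toy 3-d vectors).
# A real system would learn this mapping; these values are invented.
CODEBOOK = {
    "request for step-by-step technical instructions": [0.9, 0.1, 0.0],
    "legal liability context with adversarial framing": [0.1, 0.8, 0.3],
    "benign small talk": [0.0, 0.1, 0.9],
}

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def describe(activation):
    """Translation step: pick the description whose prototype is closest."""
    return max(CODEBOOK, key=lambda d: cosine(activation, CODEBOOK[d]))

def reconstruct(description):
    """Reconstruction step: map a description back to an approximate activation."""
    return CODEBOOK[description]

@dataclass
class AuditRecord:
    layer: int
    description: str
    fidelity: float

def audit(activation, layer):
    """Translate, reconstruct, and package the result for logging."""
    description = describe(activation)
    fidelity = cosine(activation, reconstruct(description))
    return AuditRecord(layer=layer, description=description, fidelity=round(fidelity, 3))

# Stand-in for an activation captured at layer 12 (the capture step is assumed done).
record = audit([0.85, 0.2, 0.05], layer=12)
print(record)
```

The point of the sketch is the shape of the data flow: a vector goes in, and a readable description plus a fidelity score comes out, which is what an auditor would actually consume.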

The Reconstruction Fidelity Question

The most technically significant aspect of this approach is the decoder-side reconstruction. Prior interpretability methods often operated in one direction: compress or project activations into something human-readable, with no way to verify how much information was lost in translation. By maintaining a reconstruction pathway, natural language autoencoders enable a measurable fidelity metric: how well does the activation reconstructed from the natural language description approximate the original activation?

High reconstruction fidelity means the natural language description is a genuinely informative summary of the activation, not a lossy projection that discards the safety-relevant signal. Low fidelity at specific layers or input types would itself be a diagnostic signal: it indicates that the model's internal representations at those points resist natural language characterization, and those may be exactly the regions that adversarial inputs exploit.
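One way such a fidelity check could be computed is sketched below. The source does not say which metric Anthropic uses, so the normalized squared error here is an assumption, as are the function names and the `max_error=0.25` cutoff.

```python
def normalized_error(original, reconstructed):
    """Squared reconstruction error divided by the squared norm of the original.

    0.0 means a perfect reconstruction; 1.0 is as bad as predicting all zeros.
    """
    if len(original) != len(reconstructed):
        raise ValueError("vectors must have the same length")
    err = sum((o - r) ** 2 for o, r in zip(original, reconstructed))
    norm = sum(o * o for o in original)
    if norm == 0:
        raise ValueError("original activation is all zeros")
    return err / norm

def low_fidelity_layers(pairs_by_layer, max_error=0.25):
    """Return the layers whose mean normalized error exceeds max_error.

    max_error is an arbitrary illustrative threshold, not a published value.
    """
    flagged = []
    for layer, pairs in sorted(pairs_by_layer.items()):
        mean_err = sum(normalized_error(o, r) for o, r in pairs) / len(pairs)
        if mean_err > max_error:
            flagged.append(layer)
    return flagged

# Invented (original, reconstructed) activation pairs, keyed by layer index.
pairs_by_layer = {
    8: [([1.0, 0.0], [0.9, 0.1])],    # close reconstruction
    20: [([1.0, 0.0], [0.2, 0.7])],   # poor reconstruction
}
print(low_fidelity_layers(pairs_by_layer))
```

Layers returned by `low_fidelity_layers` are the ones where descriptions should be trusted least, and where closer manual inspection is most worthwhile.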

Enterprise Security Implications: Four Concrete Use Cases

1. Real-Time Jailbreak Detection

Current jailbreak detection relies almost exclusively on output filtering: scan the model's response for prohibited content and block it. This is reactive by design. By the time the filter triggers, the model has already completed the reasoning chain that produced the harmful output.

Natural language autoencoder outputs enable a fundamentally different approach: inspect the activation state mid-generation, before the output is produced. If the activation explanation at layer N reads "model is representing a context of bypassing safety constraints via fictional framing," a safety layer can intervene before the completion is returned. This shifts jailbreak defense from reactive filtering to proactive reasoning-chain inspection.

The practical barrier here is latency — running an autoencoder pass mid-generation adds computational overhead. But for high-stakes enterprise deployments (legal, financial, medical), that tradeoff is likely acceptable.
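The control flow of such an intervention can be sketched as follows. This is a minimal illustration, assuming a hypothetical generation loop that yields each candidate token together with the activation description produced for that step. The phrase list and the substring match are invented for clarity; a real safety layer would need something far more robust.

```python
# Hypothetical patterns, invented for illustration only.
FLAGGED_PHRASES = (
    "bypassing safety constraints",
    "evading content policy",
)

def is_flagged(description):
    """Crude check: does the description contain any flagged phrase?"""
    text = description.lower()
    return any(phrase in text for phrase in FLAGGED_PHRASES)

def guarded_generate(steps):
    """Consume (token, description) pairs and stop before emitting a token
    whose accompanying activation description is flagged.

    Returns (text_emitted_so_far, blocking_description_or_None).
    """
    emitted = []
    for token, description in steps:
        if is_flagged(description):
            return "".join(emitted), description
        emitted.append(token)
    return "".join(emitted), None

# Stand-in for a real generation loop; tokens and descriptions are made up.
steps = [
    ("Sure", "model is representing a polite acknowledgement"),
    (", ", "model is representing a continuation of a list"),
    ("first", "model is representing a context of bypassing safety "
              "constraints via fictional framing"),
]
text, blocked = guarded_generate(steps)
print(repr(text), "| blocked by:", blocked)
```

Every generated token pays for one description lookup in this design, which is where the latency cost described above comes from.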

2. Audit Trails for Regulated Industries

In regulated sectors (financial services under MiFID II or SEC guidance, healthcare under HIPAA-adjacent AI governance frameworks, or defense contractors aligning with the voluntary NIST AI RMF), organizations face increasing pressure to document not just what an AI system decided, but the reasoning basis for that decision.

Natural language activation logs could serve as machine-generated audit trails: timestamped, queryable records of what internal representations Claude was operating from when it produced a given output. This is qualitatively different from logging prompts and completions. It captures the intermediate reasoning state, which is where subtle misalignment or unintended generalization typically manifests.
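As a rough sketch of what a timestamped, queryable record might look like, the example below writes entries as JSON Lines and filters them by keyword. The field names (`request_id`, `layer`, `description`, `fidelity`) and the storage format are assumptions made for illustration; the source does not describe an actual log schema.

```python
import json
from datetime import datetime, timezone

def make_record(request_id, layer, description, fidelity, now=None):
    """Build one audit entry. Field names are hypothetical."""
    now = now or datetime.now(timezone.utc)
    return {
        "timestamp": now.isoformat(),
        "request_id": request_id,
        "layer": layer,
        "description": description,
        "fidelity": fidelity,
    }

def to_jsonl(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, sort_keys=True) for r in records)

def query(jsonl_text, keyword):
    """Return records whose description contains keyword (case-insensitive)."""
    hits = []
    for line in jsonl_text.splitlines():
        record = json.loads(line)
        if keyword.lower() in record["description"].lower():
            hits.append(record)
    return hits

# A fixed timestamp keeps the example deterministic; the entries are invented.
fixed = datetime(2026, 1, 1, tzinfo=timezone.utc)
log = to_jsonl([
    make_record("req-1", 12, "legal liability context with adversarial framing", 0.91, fixed),
    make_record("req-2", 12, "request for step-by-step technical instructions", 0.88, fixed),
])
for hit in query(log, "adversarial"):
    print(hit["timestamp"], hit["request_id"], hit["description"])
```

Storing the fidelity score alongside each description lets a reviewer discount entries where the description is known to be a poor summary of the underlying activation.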

3. Red Team Efficiency

AI red teaming is currently labor-intensive and coverage-limited. Human red teamers craft adversarial inputs, observe outputs, and infer from behavioral patterns whether the model has an exploitable reasoning vulnerability. This inference step — from observed behavior back to internal mechanism — is where most red team effort is spent and where coverage gaps emerge.

With natural language autoencoder access, red teamers can directly inspect activation states across a sweep of adversarial inputs, clustering by activation description rather than by output behavior. This enables discovery of mechanistically similar attack vectors that produce superficially different outputs — a class of vulnerability that behavioral red teaming systematically misses.
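Clustering by description rather than by output could look something like the sketch below. It uses word-overlap (Jaccard) similarity and a greedy single pass as a crude stand-in for semantic similarity. A practical workflow would more likely use text embeddings, and the prompt IDs, descriptions, and `threshold=0.5` are all invented.

```python
def tokens(text):
    """Lowercased set of whitespace-separated words."""
    return set(text.lower().split())

def jaccard(a, b):
    """Jaccard similarity of two sets: |intersection| / |union|."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def cluster_by_description(items, threshold=0.5):
    """Greedy clustering: each (prompt_id, description) joins the first cluster
    whose representative description is similar enough, else starts a new one.
    """
    clusters = []  # list of (representative_tokens, [prompt_ids])
    for prompt_id, description in items:
        toks = tokens(description)
        for rep, members in clusters:
            if jaccard(toks, rep) >= threshold:
                members.append(prompt_id)
                break
        else:
            clusters.append((toks, [prompt_id]))
    return [members for _, members in clusters]

# Hypothetical red-team sweep: different prompt styles, similar internal state.
sweep = [
    ("roleplay-01", "bypassing safety constraints via fictional framing"),
    ("translate-07", "bypassing safety constraints via translation framing"),
    ("recipe-03", "request for cooking instructions"),
]
print(cluster_by_description(sweep))
```

Here the role-play and translation prompts land in the same cluster even though their outputs would look nothing alike, which is the kind of mechanistic grouping the paragraph above describes.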

4. Model Drift and Degradation Monitoring

Fine-tuned enterprise models drift. Continued training, RLHF updates, and domain adaptation can shift internal representations in ways that aren't immediately visible in benchmark performance but manifest as changed reasoning patterns on edge cases. Natural language activation monitoring provides a new signal for detecting this drift: if the activation descriptions for a standard test suite begin shifting in character — becoming more uncertain, more adversarially framed, or encoding different contextual assumptions — that's an early warning before behavioral degradation becomes visible in outputs.
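A drift signal of this kind could be computed by comparing how often each type of description appears before and after a model update. The sketch below assumes descriptions have already been bucketed into coarse labels (itself a nontrivial step) and uses total variation distance. The labels and counts are invented, and the metric is one reasonable choice rather than anything the source prescribes.

```python
from collections import Counter

def distribution(labels):
    """Relative frequency of each label."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}

def total_variation(p, q):
    """Total variation distance between two discrete distributions (0 to 1)."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)

def drift_score(baseline_labels, current_labels):
    """0.0 means identical label mix; 1.0 means no overlap at all."""
    return total_variation(distribution(baseline_labels), distribution(current_labels))

# Description labels for the same test suite, before and after a fine-tune.
baseline = ["neutral"] * 8 + ["uncertain"] * 2
current = ["neutral"] * 5 + ["uncertain"] * 3 + ["adversarial framing"] * 2
print(round(drift_score(baseline, current), 3))
```

Tracking this score across releases gives a single number to alert on, with the per-label differences available for diagnosis when it rises.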

Limitations and Open Questions

This is a genuine breakthrough, but it is not a complete solution to enterprise AI security risks. Several significant limitations deserve direct acknowledgment.

Coverage depth: Natural language autoencoders, as currently described, operate on specific layers and activation types. A model's full reasoning chain spans many layers and multiple attention mechanisms. Achieving comprehensive coverage, where every safety-relevant intermediate state is captured and described, remains an unsolved engineering challenge.

Adversarial robustness of the autoencoder itself: If natural language autoencoders become a standard safety layer, they become an attack surface. A sufficiently sophisticated adversarial input might be crafted to produce activation patterns that the autoencoder describes benignly while the underlying representation encodes harmful intent. This is an analog of adversarial examples for the interpretability layer itself, and it has not been systematically studied.

Semantic precision at scale: Natural language descriptions are inherently lossy and ambiguous. "The model is representing a context of bypassing safety constraints" could describe a legitimate security research query or a genuine jailbreak attempt. At enterprise scale, the false positive rate of activation-based flagging will need careful calibration to avoid alert fatigue.
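The calibration concern can be made concrete with base-rate arithmetic. The numbers below are invented purely for illustration: they assume 1 in 1,000 requests is a genuine attack, a detector that catches 95% of attacks, and a 1% false positive rate.

```python
def flag_precision(prevalence, true_positive_rate, false_positive_rate):
    """Fraction of flagged requests that are genuine attacks (Bayes' rule)."""
    true_flags = prevalence * true_positive_rate
    false_flags = (1 - prevalence) * false_positive_rate
    total = true_flags + false_flags
    return true_flags / total if total else 0.0

def daily_false_alerts(requests_per_day, prevalence, false_positive_rate):
    """Expected number of benign requests flagged per day."""
    return requests_per_day * (1 - prevalence) * false_positive_rate

# Invented operating point: rare attacks, a seemingly accurate detector.
precision = flag_precision(prevalence=0.001, true_positive_rate=0.95,
                           false_positive_rate=0.01)
false_alerts = daily_false_alerts(1_000_000, prevalence=0.001,
                                  false_positive_rate=0.01)
print(f"precision: {precision:.1%}, false alerts per day: {false_alerts:.0f}")
```

Under these assumed numbers fewer than one flag in ten is a real attack, and a deployment handling a million requests a day would generate thousands of false alerts. That is the alert-fatigue problem in quantitative form.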

Computational overhead: Running autoencoder passes in production — especially mid-generation for real-time intervention — adds latency and cost. For latency-sensitive applications, this may limit deployment to asynchronous audit modes rather than real-time intervention.

Where This Fits in the Interpretability Landscape

Anthropic's natural language autoencoder work builds on a research trajectory that has accelerated significantly since 2023. In the progression from attention visualization → probing classifiers → sparse autoencoders → natural language autoencoders, each step narrows the gap between mechanistic finding and operational usability.

The remaining gap is significant but now measurable. The question is no longer "can we understand anything about model internals" but "can we achieve sufficient coverage, fidelity, and operational integration to make interpretability a load-bearing component of enterprise AI security architecture."

For security teams evaluating AI deployments today, the practical implication is this: natural language autoencoders are not yet a plug-in security product, but they establish the technical foundation for one. Organizations that begin building interpretability infrastructure now — logging activation states, developing internal expertise in reading activation descriptions, piloting red team workflows that incorporate mechanistic inspection — will be materially better positioned as this tooling matures.

The black box problem is not solved. But for the first time, the box has a window.

Sources

- Anthropic Introduces Natural Language Autoencoders — MarkTechPost

- Anthropic Sparse Autoencoder Research, 2023–2024 (Anthropic Research Blog)

- NIST AI Risk Management Framework 1.0: https://www.nist.gov/system/files/documents/2023/01/26/AI%20RMF%201.0.pdf

- Elhage et al., "Toy Models of Superposition," Anthropic, 2022: https://transformer-circuits.pub/2022/toy_model/index.html

- EU AI Act Technical Requirements for High-Risk Systems: https://artificialintelligenceact.eu/

Last reviewed: May 08, 2026