Microsoft GitHub Breach: A Wake-Up Call for Enterprise AI

Microsoft's recent repository breach exposed dangerous vulnerabilities in AI developer toolchains. Discover the three security gaps every enterprise must address.

An AI-focused content and services platform, headquartered in New York.

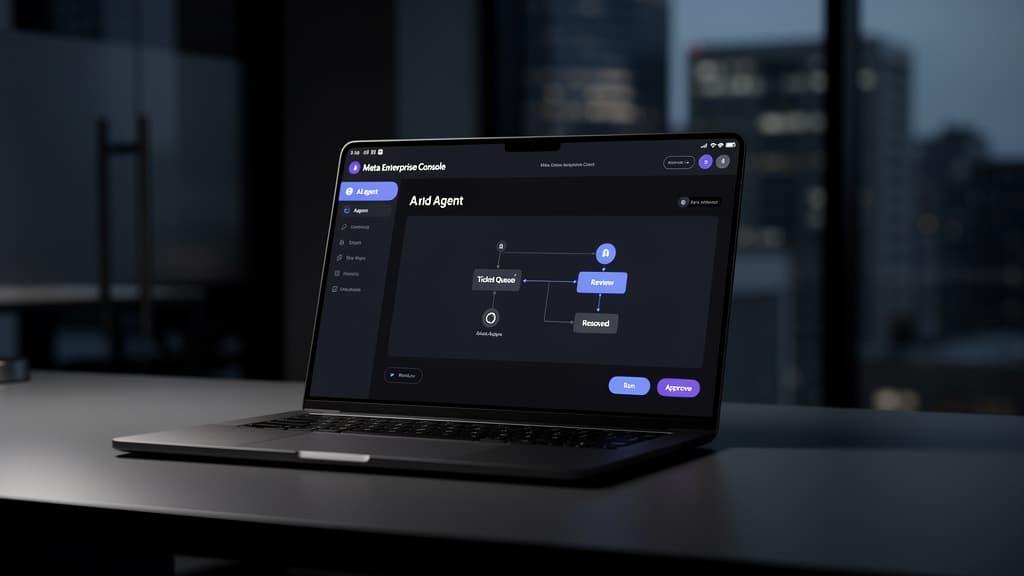
