Multi-token prediction is transforming production inference. Learn how to implement Gemma 4 drafters to achieve 3x faster text generation without quality loss.

What Multi-Token Prediction Actually Solves

Multi-token prediction (MTP) is a technique that allows a large language model to generate more than one token per forward pass — or, in the speculative decoding variant Google has deployed for Gemma 4, to use a small auxiliary "drafter" model to propose multiple tokens simultaneously while the main model validates them in a single parallel pass. The result: up to 3x faster text generation with no measurable quality loss.

If you're running large language model (LLM) deployment at scale, this matters immediately. Autoregressive generation — the standard approach where every token requires a full forward pass through the entire model — is the single biggest throughput bottleneck in production inference. MTP with speculative decoding attacks that bottleneck directly, and Google's release of MTP drafters for Gemma 4 gives engineering teams a concrete, production-ready implementation to work from.

This tutorial walks through the mechanics of how Gemma 4's MTP works, what speculative decoding actually does under the hood, and three concrete implementation paths your team can take to achieve those 3x inference gains.

Prerequisites

Before diving in, you should be comfortable with:

- Basic transformer inference concepts (forward passes, KV cache, autoregressive decoding)

- Python and familiarity with HuggingFace Transformers or a similar inference framework

- Access to Gemma 4 model weights (available via Google's model hub)

- A GPU environment capable of running Gemma 4 (at minimum, an A100 or H100 for the full model; smaller quantized variants can run on consumer-grade hardware)

By the end of this tutorial, you'll understand how to configure Gemma 4's MTP drafters, integrate speculative decoding into your inference pipeline, and tune the key parameters that determine whether you hit 2x or the full 3x speedup.

How Gemma 4's MTP Architecture Works

The Core Bottleneck: One Token at a Time

Standard autoregressive LLM inference is inherently sequential. To generate a 100-token response, the model performs 100 separate forward passes. Each pass is compute-bound and memory-bandwidth-bound — the model loads its full parameter set (or the relevant KV cache) for every single token. At scale, this translates directly into high latency and low throughput.

The fundamental problem: a 70B parameter model generating 500 tokens requires 500 full forward passes. Each pass reads tens of gigabytes of weights. This is why inference costs dominate LLM deployment budgets.

The Drafter-Verifier Pattern

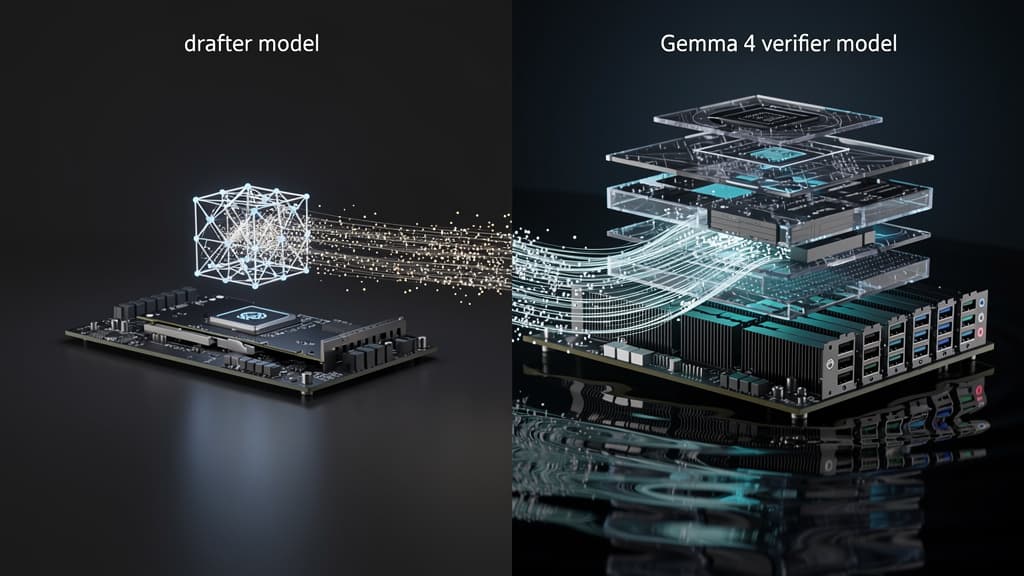

Speculative decoding sidesteps this by splitting the job between two models:

- The drafter — a small, fast auxiliary model that proposes a sequence of k candidate tokens (typically 4–8 tokens ahead)

- The verifier — the full Gemma 4 model, which evaluates all k proposed tokens in a single parallel forward pass

Because transformer attention is parallelizable across sequence positions, the verifier can check whether the drafter's proposals are consistent with its own distribution in one shot. If the drafter is right (or close enough), you get k tokens for the cost of roughly one forward pass. If the drafter is wrong on token i, you reject from that point, take the verifier's correction, and restart — but you've still saved the cost of passes 1 through i-1.

According to reporting from The Decoder, Google's implementation achieves this without any modification to the base Gemma 4 model's weights or output distribution — the quality of the generated text is statistically identical to standard autoregressive decoding.

What Makes Gemma 4's MTP Drafters Different

Google trained dedicated MTP drafter models specifically paired with Gemma 4. These aren't generic small models — they're distilled to match Gemma 4's token distribution as closely as possible, which is what drives the high acceptance rate (the fraction of drafter tokens the verifier accepts). A higher acceptance rate means fewer rejected sequences and closer to the theoretical maximum speedup.

As MarkTechPost's coverage details, the drafter models are released alongside Gemma 4 and are designed to run on the same hardware stack, keeping deployment complexity manageable.

Way 1 — Drop-In Speculative Decoding with HuggingFace

The fastest path to MTP gains if you're already in the HuggingFace ecosystem.

Step 1: Load the Drafter and Main Model

python from transformers import AutoModelForCausalLM, AutoTokenizer

Load the Gemma 4 verifier (main model)

verifier = AutoModelForCausalLM.from_pretrained( "google/gemma-4-27b", device_map="auto", torch_dtype="auto" )

Load the paired MTP drafter

drafter = AutoModelForCausalLM.from_pretrained( "google/gemma-4-mtp-drafter", device_map="auto", torch_dtype="auto" )

tokenizer = AutoTokenizer.from_pretrained("google/gemma-4-27b")

Step 2: Configure Speculative Decoding

HuggingFace's generate() method supports speculative decoding natively via the assistant_model parameter:

python inputs = tokenizer("Explain the architecture of a transformer model:", return_tensors="pt").to("cuda")

outputs = verifier.generate( **inputs, assistant_model=drafter, max_new_tokens=512, do_sample=False # greedy decoding; set True for sampling with temperature )

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

Step 3: Tune the Lookahead Length

The number of tokens the drafter proposes per step (num_assistant_tokens) is the primary knob:

python outputs = verifier.generate( **inputs, assistant_model=drafter, max_new_tokens=512, num_assistant_tokens=5, # start at 4-6; tune based on your workload do_sample=False )

Tuning guidance: For factual, low-entropy outputs (code, structured data), the drafter acceptance rate is high — push num_assistant_tokens to 6–8. For creative or high-temperature sampling tasks, acceptance rates drop; 3–4 is safer. Profile your specific workload before committing to a value.

Way 2 — High-Throughput Batch Inference with vLLM

If you're serving Gemma 4 under real traffic with concurrent requests, HuggingFace's generate() isn't your bottleneck solution — you need a production inference server. vLLM supports speculative decoding and is the recommended path for teams running LLM deployment at scale.

Step 1: Launch the vLLM Server with MTP

bash

python -m vllm.entrypoints.openai.api_server

--model google/gemma-4-27b

--speculative-model google/gemma-4-mtp-drafter

--num-speculative-tokens 5

--tensor-parallel-size 2

--gpu-memory-utilization 0.90

Key flags:

--speculative-model: points to the MTP drafter--num-speculative-tokens: the lookahead depth (equivalent tonum_assistant_tokensabove)--tensor-parallel-size: shard the verifier across GPUs; the drafter runs on a single GPU by default

Step 2: Send Requests via the OpenAI-Compatible API

python import openai

client = openai.OpenAI(base_url="http://localhost:8000/v1", api_key="none")

response = client.chat.completions.create( model="google/gemma-4-27b", messages=[{"role": "user", "content": "Summarize the key risks of speculative decoding in production."}], max_tokens=300 )

print(response.choices[0].message.content)

Step 3: Monitor Acceptance Rate Metrics

vLLM exposes a Prometheus metrics endpoint. The metric to watch is vllm:spec_decode_draft_acceptance_rate. A healthy acceptance rate for a well-matched drafter like Gemma 4's MTP pair should be above 0.75 for typical instruction-following tasks. If you're seeing below 0.6, reduce --num-speculative-tokens or investigate whether your input distribution differs significantly from the drafter's training distribution.

bash curl http://localhost:8000/metrics | grep spec_decode

Way 3 — Custom Integration with Token Budget Controls

For teams building proprietary inference stacks — or those who need fine-grained control over cost versus latency tradeoffs — implementing speculative decoding at the loop level gives you the most flexibility.

The Core Verification Loop

python import torch

def speculative_generate(verifier, drafter, input_ids, max_new_tokens=256, k=5): generated = input_ids.clone()

while generated.shape[1] - input_ids.shape[1] < max_new_tokens:

# Step 1: Drafter proposes k tokens

with torch.no_grad():

draft_output = drafter.generate(

generated,

max_new_tokens=k,

do_sample=False

)

draft_tokens = draft_output[:, generated.shape[1]:]

# Step 2: Verifier scores the full candidate sequence in one pass

candidate = torch.cat([generated, draft_tokens], dim=1)

with torch.no_grad():

verifier_logits = verifier(candidate).logits

# Step 3: Token-level acceptance check

accept_length = 0

for i in range(draft_tokens.shape[1]):

verifier_token = verifier_logits[:, generated.shape[1] + i - 1, :].argmax(dim=-1)

if verifier_token.item() == draft_tokens[:, i].item():

accept_length += 1

else:

break

# Step 4: Append accepted tokens + one verifier correction

accepted = draft_tokens[:, :accept_length]

correction = verifier_logits[:, generated.shape[1] + accept_length - 1, :].argmax(dim=-1, keepdim=True)

generated = torch.cat([generated, accepted, correction], dim=1)

return generated

This stripped-down loop illustrates the core mechanics. Production implementations add temperature-based stochastic acceptance (the full algorithm from Leviathan et al., 2023), KV cache management for both models, and early stopping on EOS tokens.

When to Use This Approach

- You're integrating Gemma 4 into a custom serving framework (Triton Inference Server, TorchServe, etc.)

- You need to implement token budget controls — for example, capping drafter lookahead dynamically based on current GPU memory pressure

- You want to A/B test different drafter models or lookahead depths without changing your serving infrastructure

Understanding the 3x Number: What Determines Your Actual Speedup

The 3x figure is real but conditional. Your actual speedup depends on three factors:

| Factor | Impact on Speedup | Optimization |

|---|---|---|

| Drafter acceptance rate | Highest | Use the matched Gemma 4 MTP drafter; don't substitute generic small models |

| Lookahead depth (k) | High | Tune per workload; too high causes more rejections |

| Batch size | Moderate | Speculative decoding benefits shrink at large batch sizes; best for latency-sensitive, low-batch workloads |

| Hardware memory bandwidth | Moderate | MTP gains are largest when memory bandwidth is the binding constraint |

Key insight: Speculative decoding optimizes latency more than throughput. For high-concurrency batch workloads, the gains are smaller. For real-time, interactive LLM deployment — chatbots, copilots, code assistants — the 3x improvement is achievable and transformative.

What to Watch Next

Google's MTP drafter release for Gemma 4 is a signal, not an endpoint. The broader pattern — training small models specifically to match the token distribution of large verifier models — is being applied across the industry. Meta's research into MTP as a training objective (rather than purely an inference technique) suggests future model releases may have speculative decoding capability baked in from pretraining, further raising acceptance rates and pushing speedups beyond 3x.

For teams running production LLM deployments today, the practical takeaway is straightforward: the infrastructure to achieve 3x faster inference on Gemma 4 exists, is open, and requires configuration rather than novel engineering. The three paths above — HuggingFace for prototyping, vLLM for production serving, and custom loops for specialized stacks — cover the majority of real-world deployment scenarios.

Start with the vLLM path if you're already serving traffic. Instrument the acceptance rate metric from day one. And treat the 3x figure as a ceiling to tune toward, not a guarantee — your workload's entropy is the variable that matters most.

Sources

- Google AI Releases MTP Drafters for Gemma 4 — MarkTechPost

- Google Speeds Up Gemma 4 Threefold with Multi-Token Prediction — The Decoder

- Fast Inference from Transformers via Speculative Decoding — Leviathan et al., 2023

Last reviewed: May 07, 2026