New research shows AI data centers create 9°C 'heat islands,' threatening infrastructure scalability. Discover how to adapt your enterprise AI cloud architecture.

AI Data Centers Are Creating 9°C 'Heat Islands': What This Means for Enterprise AI Cloud Architecture

The rapid expansion of artificial intelligence is generating an unexpected and severe environmental byproduct: the data heat island effect. A newly published study reveals that hyperscale facilities powering massive AI models are raising local land surface temperatures by an average of 2°C (3.6°F), with extreme cases driving spikes up to 9.1°C (16.4°F). Unlike traditional urban heat islands caused by concrete and traffic, these localized thermal anomalies are directly tied to the immense energy density and cooling exhaust of modern computing facilities.

For technology leaders, this is no longer just an ecological concern—it is a critical constraint that will radically reshape enterprise AI cloud architecture. As data centers emit heat ripples that disrupt local microclimates up to 10 kilometers away, affecting an estimated 340 million people globally, regulatory pushback and skyrocketing cooling costs are inevitable. Companies relying on concentrated, centralized compute for their AI workloads must now factor thermal limits into their infrastructure planning. This article dissects the new findings and explores how organizations must adapt their cloud computing strategies to survive an era of constrained, heat-capped AI deployment.

The Mechanics of the "Data Heat Island" Effect

To understand the looming impact on enterprise infrastructure, decision-makers must first understand the scale of the thermal problem. AI workloads demand significantly more power than traditional web hosting or database management. Training large language models requires clustering tens of thousands of GPUs, which act essentially as high-speed industrial heaters that must continuously vent thermal exhaust to prevent hardware failure.

A comprehensive study led by researcher Andrea Marinoni at the University of Cambridge analyzed satellite land surface temperature data spanning 2004 to 2024. By cross-referencing this thermal data with the geographical coordinates of more than 8,400 AI data centers, the researchers isolated the direct warming impact of these facilities (gist.science).

The findings are stark:

- Immediate Warming: In the months following an AI data center's operational launch, the surrounding land surface temperature increases by an average of 2°C (3.6°F) (themondonews.com).

- Extreme Peaks: In the most severe cases, researchers documented localized temperature spikes of 9.1°C (16.4°F) (newscientist.com).

- Massive Radius: The thermal impact is not confined to the facility's parking lot. The heat spreads outward, creating a warming ripple that extends up to 10 kilometers (6 miles) away. Even at a distance of 7 kilometers, the heat intensity only drops by 30% (booboone.com).
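The figures in this list can be checked with a few lines of arithmetic. The following is a minimal sketch, not part of the study: it assumes the reported 30% drop at 7 kilometers applies proportionally to the warming measured at the facility, which the summary does not state explicitly, and the function names are illustrative.

```python
def delta_c_to_f(delta_c: float) -> float:
    """Convert a temperature *difference* from Celsius to Fahrenheit.

    A difference has no +32 offset; only the 9/5 scale factor applies.
    """
    return delta_c * 9 / 5


def warming_at_7km(site_warming_c: float, drop_fraction: float = 0.30) -> float:
    """Warming left at 7 km, ASSUMING the 30% drop scales proportionally."""
    return site_warming_c * (1 - drop_fraction)


# Reported values: average warming and the most extreme case.
for label, warming_c in [("average", 2.0), ("extreme", 9.1)]:
    remaining_c = warming_at_7km(warming_c)
    print(
        f"{label}: {warming_c} °C ({delta_c_to_f(warming_c):.1f} °F) at the site, "
        f"roughly {remaining_c:.1f} °C at 7 km"
    )
```

Under that proportionality assumption, a 2°C average rise would still be about 1.4°C at 7 kilometers, which is why the radius matters as much as the peak.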

"The results we obtained were quite surprising," noted Marinoni. "This could pose a significant problem."

The study highlights that regions heavily populated by data centers, such as the Bajío region in Mexico and the Aragón region in Spain, have already experienced an otherwise unexplained 2°C temperature increase over the past two decades.

The Looming Crisis for Enterprise AI Cloud Architecture

For enterprise architects and CTOs, the data heat island effect introduces a severe physical limit to how AI infrastructure can scale. Historically, cloud economics favored massive centralization—building the largest possible data centers in optimal tax jurisdictions to achieve economies of scale.

However, this centralized model is colliding with physical reality. Real estate firm JLL projects that global data center capacity will double between 2025 and 2030, with AI driving 50% of that new demand (themondonews.com). If deploying high-density AI servers can raise local temperatures by as much as 9°C, municipalities are likely to restrict or halt new hyperscale permits, citing the health risks to the estimated 340 million people already living within these 10-kilometer heat zones.
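To make the JLL projection concrete, the implied yearly growth rate can be derived from the doubling figure. This is a rough sketch that assumes smooth compound growth over the five years and normalizes 2025 capacity to 1.0; neither assumption comes from JLL, and the function name is illustrative.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Compound annual growth rate needed to reach `multiple` in `years`."""
    return multiple ** (1 / years) - 1


base_capacity = 1.0                      # 2025 capacity, normalized (assumption)
target_capacity = 2.0 * base_capacity    # "double between 2025 and 2030"
years = 2030 - 2025

rate = implied_annual_growth(target_capacity / base_capacity, years)
new_capacity = target_capacity - base_capacity
ai_driven = 0.50 * new_capacity          # "AI driving 50% of that new demand"

print(f"Implied growth: {rate:.1%} per year")
print(f"AI-driven additions: {ai_driven:.2f}x of 2025 total capacity")
```

Read this way, the AI-driven additions alone would equal about half of all capacity that existed in 2025, which is the scale at which permitting limits start to bite.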

This regulatory and physical bottleneck will force a fundamental redesign of enterprise AI cloud architecture.

From Centralized Hyperscaling to Distributed Compute

Enterprises can no longer assume that centralized cloud regions will have infinite capacity for their AI workloads. As hyperscalers face municipal pushback over thermal emissions, the cost of top-tier, centralized GPU access will likely surge.

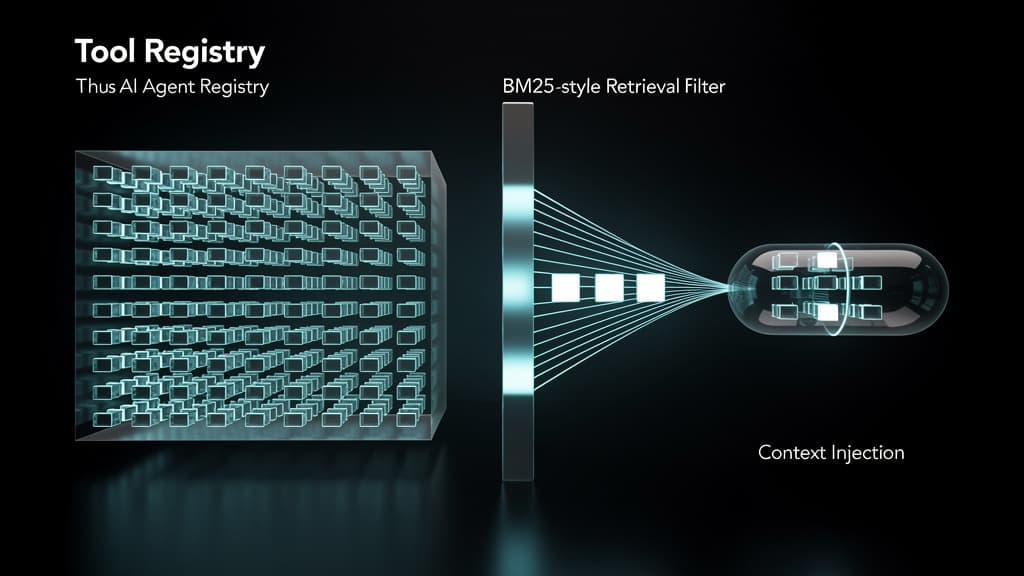

To mitigate this risk, architects must design systems that distribute AI workloads across multiple, smaller geographic regions rather than relying on a single mega-facility. This involves adopting microservices architectures optimized for AI, where training might occur in specialized facilities with advanced thermal capture, while inference is pushed out to edge networks or smaller, localized data centers.
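The split described above, with training in specialized facilities and inference at the edge, can be sketched as a simple placement routine. This is a hypothetical illustration rather than any provider's scheduler: the `Site` and `Workload` types, the tier names, and the `headroom_kw` budget are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    tier: str           # "specialized" (heat-capture training site) or "edge"
    headroom_kw: float  # hypothetical remaining power/thermal budget


@dataclass
class Workload:
    name: str
    kind: str           # "training" or "inference"
    power_kw: float


def place(workloads: list[Workload], sites: list[Site]) -> dict[str, str]:
    """Greedy placement: training -> specialized sites, inference -> edge sites."""
    wanted_tier = {"training": "specialized", "inference": "edge"}
    placement: dict[str, str] = {}
    # Place the largest workloads first so they are not crowded out.
    for w in sorted(workloads, key=lambda w: w.power_kw, reverse=True):
        candidates = [
            s for s in sites
            if s.tier == wanted_tier[w.kind] and s.headroom_kw >= w.power_kw
        ]
        if not candidates:
            placement[w.name] = "unplaced"
            continue
        # Prefer the site with the most headroom to spread load (and heat).
        site = max(candidates, key=lambda s: s.headroom_kw)
        site.headroom_kw -= w.power_kw
        placement[w.name] = site.name
    return placement


sites = [
    Site("train-north", "specialized", 5000),
    Site("edge-a", "edge", 300),
    Site("edge-b", "edge", 250),
]
workloads = [
    Workload("llm-finetune", "training", 4000),
    Workload("chat-inference", "inference", 200),
    Workload("search-inference", "inference", 200),
]
print(place(workloads, sites))
```

The design choice worth noting is that each site carries its own budget, so a workload that does not fit is reported as unplaced instead of being forced into an already saturated location.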

Rethinking Hardware and Workload Efficiency

The environmental cost of AI compute will rapidly translate into a financial cost for enterprises. Chris Preist from the University of Bristol points out that further research is needed to understand the exact split between the heat generated by computation versus the heat reflected by the massive physical structures themselves (booboone.com). Regardless of the exact ratio, the operational imperative remains the same: enterprises must optimize their software.

Enterprise AI cloud architecture will increasingly prioritize:

- Small Language Models (SLMs): Moving away from massive, generalized models toward domain-specific SLMs that require a fraction of the compute (and therefore generate a fraction of the heat) to train and run.

- Liquid Cooling Mandates: When selecting cloud vendors, enterprises will need to audit the provider's cooling infrastructure. Traditional air cooling is highly inefficient for AI workloads and exacerbates the heat island effect. Vendors utilizing direct-to-chip liquid cooling or immersion cooling—which captures heat in fluid rather than venting it into the atmosphere—will become the preferred partners for sustainable enterprise architecture.

- Heat Reuse Ecosystems: Forward-thinking cloud providers are already partnering with local municipalities to pipe the captured thermal energy from data centers into district heating systems, effectively neutralizing the heat island effect while warming nearby homes.
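The claim that smaller models need "a fraction of the compute" can be given a rough order of magnitude. The sketch below uses a widely cited rule of thumb for dense transformer training, approximately 6 × parameters × tokens floating-point operations. The rule is an approximation that does not appear in the study, and the model sizes and token count are hypothetical values chosen only for illustration.

```python
def training_flops(parameters: float, tokens: float) -> float:
    """Rule-of-thumb training compute for a dense transformer: ~6 * N * D.

    This is an approximation, not a measurement of any specific model.
    """
    return 6 * parameters * tokens


# Hypothetical sizes: a 7B-parameter SLM versus a 70B-parameter general model,
# both trained on the same (hypothetical) 1 trillion tokens.
tokens = 1e12
slm_flops = training_flops(7e9, tokens)
large_flops = training_flops(70e9, tokens)

ratio = large_flops / slm_flops
print(f"SLM training compute:   {slm_flops:.2e} FLOPs")
print(f"Large training compute: {large_flops:.2e} FLOPs")
print(f"Ratio: {ratio:.0f}x")
```

On the same hardware at the same efficiency, energy use scales with compute, and nearly all of that electrical energy ends up as heat that the facility must remove, so the compute ratio is a reasonable first proxy for the thermal ratio.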

Preparing for the Heat-Capped AI Era

The revelation that AI data centers can warm surrounding areas by up to 9.1°C marks the end of the "invisible cloud." AI infrastructure has a massive, highly localized physical footprint.

For technology leaders, building a resilient enterprise AI cloud architecture now requires looking beyond latency and compute costs. It requires building workload flexibility, prioritizing algorithmic efficiency, and strategically selecting cloud partners capable of managing the extreme thermal realities of the AI revolution. Companies that fail to factor the data heat island effect into their infrastructure planning will soon find themselves bottlenecked by environmental regulations, rising compute costs, and constrained cloud capacity.

Frequently Asked Questions

Q: What is the data heat island effect?

The data heat island effect is a localized environmental phenomenon where the massive energy consumption and thermal exhaust of high-density computing facilities—particularly AI data centers—significantly raise the land surface temperature of the surrounding area. Recent satellite data shows these facilities can increase local temperatures by an average of 2°C, and up to 9.1°C in extreme cases, affecting areas up to 10 kilometers away.

Q: How will heat island regulations affect enterprise AI cloud architecture?

As local governments recognize the warming impact of AI data centers on surrounding populations, they are likely to impose strict limits on new data center construction and energy usage. This will cap the growth of centralized hyperscale facilities, forcing enterprises to adopt distributed cloud architectures, prioritize smaller and more efficient AI models, and utilize edge computing to avoid bottlenecks at major data hubs.

Q: Are there alternative solutions to centralized AI computing?

Yes. Enterprises are increasingly turning to Small Language Models (SLMs) that require significantly less compute power than massive models like GPT-4. Additionally, shifting from traditional air-cooled data centers to facilities that use direct-to-chip liquid cooling or immersion cooling can capture heat more efficiently. Some modern facilities even repurpose this captured heat for local municipal heating grids, mitigating the environmental impact.

Last reviewed: April 01, 2026