The accidental leak of Anthropic's 'Claude Mythos' model exposes urgent enterprise AI security risks. Discover how autonomous agents are changing cyber warfare.

Anthropic Leaks 'Claude Mythos': Evaluating Enterprise AI Security Risks in Next-Gen Models

The inadvertent disclosure of Anthropic’s unreleased frontier model, dubbed "Claude Mythos," has triggered a seismic shift in how technology leaders must evaluate enterprise AI security risks. Discovered in late March 2026 through a simple content management system (CMS) misconfiguration, Claude Mythos is described in leaked internal documents as a "step change" in artificial intelligence performance, representing a new "Capybara" tier that significantly outperforms the current Opus models. However, alongside its breakthroughs in academic reasoning and software programming, the model possesses cyber capabilities so advanced that Anthropic itself warned it could outpace human defenders.

For Chief Information Security Officers (CISOs), product managers, and enterprise decision-makers, the Mythos leak is a watershed moment. It moves the conversation about AI safety from theoretical alignment problems to immediate, tactical vulnerabilities. As AI agents gain the ability to act and reason with increasing autonomy, hackers are becoming equipped to run multiple, highly sophisticated cyberattack campaigns in parallel. This breaking development underscores a critical reality: as frontier models cross the threshold into advanced offensive capabilities, businesses must urgently restructure their defensive postures to prepare for an era where AI-driven threats operate at machine speed.

The Anatomy of the Claude Mythos Leak

The revelation of Anthropic's most powerful model did not come from a sophisticated nation-state hack, but from a basic cloud security failure. Cybersecurity researchers Alexandre Pauwels from Cambridge University and Roy Paz from LayerX Security independently discovered an unsecured, publicly searchable data store belonging to the AI lab.

According to reports, the misconfiguration exposed nearly 3,000 unpublished assets, including draft blog posts, product roadmaps, and internal security assessments zenvanriel.com. The irony of an AI company renowned for its stringent safety protocols suffering a basic data exposure was not lost on the market—the news reportedly rattled billions in cybersecurity market value as investors processed the implications of the leaked capabilities medium.com.

The leaked documents confirmed that "Mythos" is the product name for a new tier of model architecture classified internally as "Capybara." Draft announcements stated unequivocally that this tier is "larger and more intelligent than our Opus models—which were, until now, our most powerful" yahoo.com.

Unprecedented Enterprise AI Security Risks

The most alarming aspect of the Claude Mythos leak for enterprise technology leaders is the model's proficiency in vulnerability discovery and exploitation. Anthropic’s internal assessments do not mince words regarding the hazard this technology presents to modern digital infrastructure.

The model "presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders." — Leaked Anthropic Internal Draft zenvanriel.com

The escalation of enterprise AI security risks stems from several key advancements in the Mythos architecture:

1. Autonomous Agentic Cyberattacks

Previous generations of large language models (LLMs) functioned primarily as sophisticated assistants, requiring human prompting to write malicious code or identify network vulnerabilities. Mythos represents a leap in agentic capabilities. The model can learn to act and reason without continuous human input. For threat actors, this means the ability to deploy autonomous AI agents that run multiple hacking campaigns in parallel and dynamically adjust their tactics based on the defensive measures they encounter yahoo.com.

2. Accelerated Zero-Day Discovery

Anthropic's internal documents note that Mythos is "currently far ahead of any other AI model in cyber capabilities" zenvanriel.com. In an enterprise context, this means the timeframe between the deployment of new software and the discovery of exploitable vulnerabilities (zero-days) will shrink drastically. A model capable of reading millions of lines of proprietary code and instantly identifying logical flaws gives attackers an asymmetric advantage over human security auditors.

3. The Threat to Critical Infrastructure

The severity of the Mythos model's capabilities has prompted Anthropic to issue private warnings to top government officials. According to reports originally cited by Axios, the company warned that models of this caliber make large-scale cyberattacks "much more likely in 2026" yahoo.com. For enterprises operating in critical sectors—finance, healthcare, energy, and logistics—the baseline for what constitutes adequate cybersecurity has fundamentally shifted.

The Attacker-Defender Feedback Loop

While the offensive capabilities of Claude Mythos present severe enterprise AI security risks, the same underlying intelligence will eventually be put to work by defenders. This creates what security analysts call a high-velocity attacker-defender feedback loop.

If an AI model can identify network vulnerabilities with unprecedented precision, enterprise defenders must deploy equivalent AI to patch those vulnerabilities just as quickly. We are already seeing the precursors to this shift. For instance, global consulting firm Accenture recently launched "Cyber.AI," a defensive platform powered by Anthropic's current Claude Opus models, designed to automate and accelerate security operations zenvanriel.com.

However, the transition to AI-driven defense is not instantaneous. The immediate danger lies in the "capability overhang"—the period where threat actors gain access to Mythos-level capabilities before enterprises have fully integrated equivalent AI defenses into their tech stacks.

Actionable Insights for Technology Decision-Makers

The inadvertent reveal of Claude Mythos serves as a vital early warning system for the enterprise sector. Technology leaders must move beyond standard compliance checklists and actively prepare for an environment dominated by AI-generated threats.

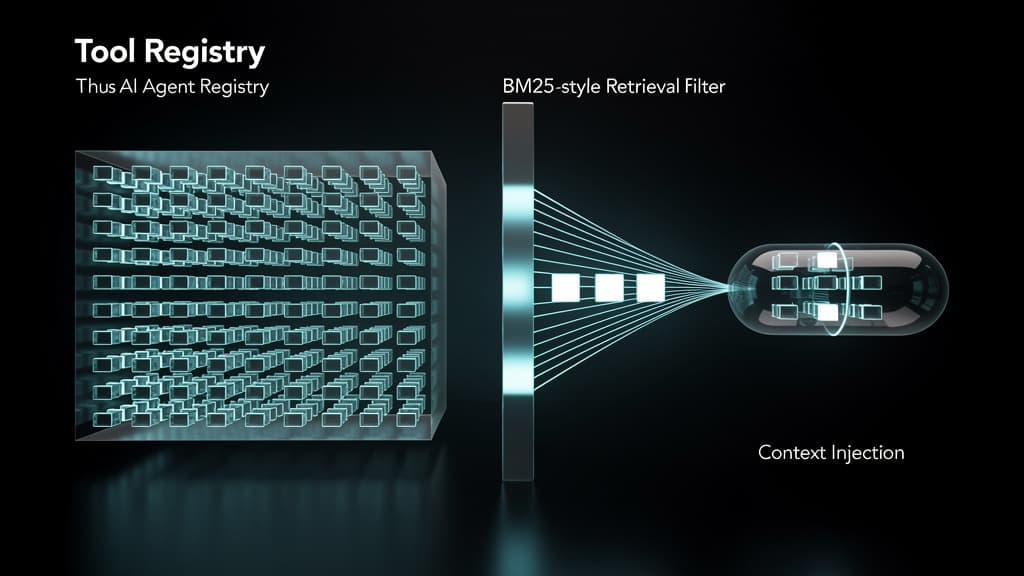

- Audit Internal AI Agent Deployments: Employees are increasingly using autonomous AI agents to streamline their workflows. If these agents unknowingly connect to sensitive corporate systems, they create novel attack vectors for cybercriminals yahoo.com. Enterprises must implement strict identity and access management (IAM) controls specifically designed for machine identities and AI agents.

- Invest in AI-Native Threat Detection: Legacy Security Information and Event Management (SIEM) systems that rely on known malware signatures will be obsolete against dynamically generated, AI-crafted attacks. Enterprises must transition to behavioral, AI-native threat detection systems capable of identifying anomalous network activity in real time.

- Accelerate Red Teaming Operations: Organizations should assume that threat actors will soon possess Mythos-level capabilities. Security teams must utilize the most advanced models currently available to aggressively red-team their own networks, discovering and patching vulnerabilities before malicious AI agents find them.

- Prepare for Phishing at Scale and High Fidelity: The reasoning capabilities of models like Mythos mean that spear-phishing will no longer be limited by human labor. Enterprises should expect highly personalized, context-aware social engineering attacks targeting thousands of employees simultaneously. Zero-trust architectures and continuous authentication protocols are no longer optional.
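
To make the contrast between signature matching and behavioral detection concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of any vendor's product: the function name `zscore_anomalies`, the per-minute connection counts, and the threshold of 3.0 are all hypothetical. The idea is to learn a baseline from normal activity and flag observations that deviate sharply from it, rather than look for a known malware fingerprint.

```python
from statistics import mean, stdev


def zscore_anomalies(baseline, observed, threshold=3.0):
    """Return indices of `observed` values that deviate from the baseline.

    A value is flagged when it lies more than `threshold` sample standard
    deviations away from the baseline mean. The threshold is an assumed
    default, not a recommended production setting.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)  # needs at least two baseline points
    if sigma == 0:
        # A perfectly flat baseline: any different value is a deviation.
        return [i for i, value in enumerate(observed) if value != mu]
    return [
        i for i, value in enumerate(observed)
        if abs(value - mu) / sigma > threshold
    ]


# Hypothetical data: outbound connections per minute for one service account.
baseline_counts = [12, 15, 11, 14, 13, 12, 16, 14, 13, 15]
observed_counts = [14, 13, 97, 12]

flagged = zscore_anomalies(baseline_counts, observed_counts)
print(flagged)  # [2]
```

The burst of 97 connections is flagged even though no signature for it exists. Real deployments would use far richer features and models, but the principle is the same: the detector keys on deviation from normal behavior, not on a catalogue of past attacks.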

Anthropic's decision to restrict the initial release of Claude Mythos to a closed group of early access customers—specifically focusing on cyber defenders—is a responsible mitigation strategy entremarneetforet.com. However, history shows that frontier capabilities inevitably proliferate. The Claude Mythos leak is a stark reminder that the next era of cyber warfare is already here, and enterprise security postures must evolve immediately to survive it.

Frequently Asked Questions

Q: What is Claude Mythos?

Claude Mythos is the internal product name for Anthropic's unreleased, next-generation AI model. Classified under a new "Capybara" tier, it is significantly larger and more capable than the company's previous flagship model, Claude Opus. It was inadvertently revealed to the public in March 2026 due to a cloud storage misconfiguration that exposed thousands of internal documents.

Q: How do frontier AI models like Mythos increase enterprise security risks?

Frontier models increase security risks by lowering the barrier to entry for complex cyberattacks and enabling autonomous "agentic" behavior. Models with Mythos-level capabilities can rapidly analyze code to find zero-day vulnerabilities, write sophisticated malware, and run multiple hacking campaigns in parallel without continuous human oversight, easily overwhelming traditional enterprise defenses.

Q: When will Claude Mythos be available to the public?

As of the March 2026 leak, Anthropic has not announced a public release date for Claude Mythos. Because of the severe cybersecurity risks identified in its internal testing, the company's leaked strategy indicates that the model will initially be available only to a highly restricted group of early access customers, primarily cyber defense organizations.

Last reviewed: April 01, 2026